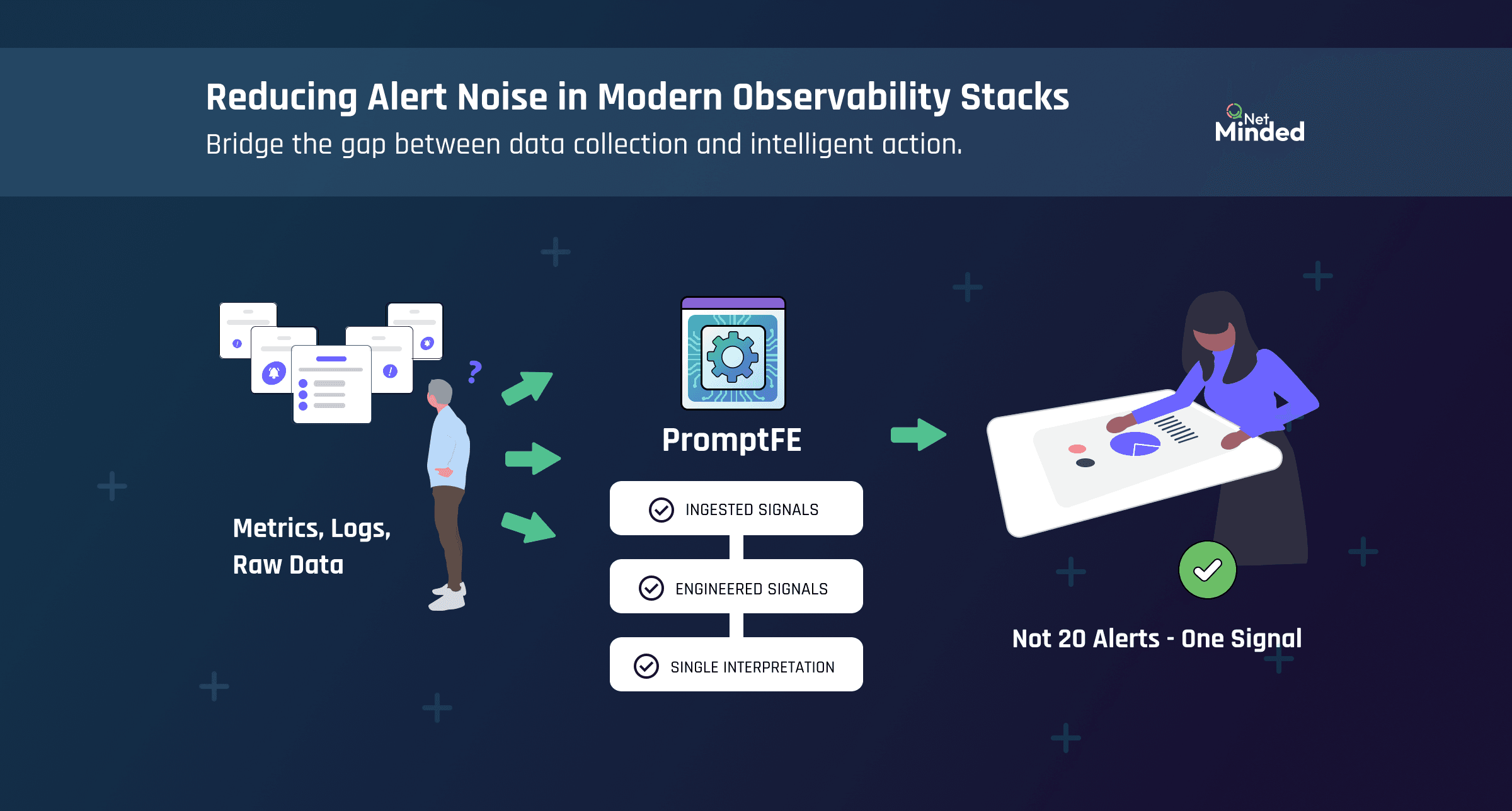

Reducing Alert Noise in Modern Observability Stacks

Nick Randall

April 9 • 6 Min Read

Bridge the gap between data collection and intelligent action.

Modern observability stacks are powerful.

With tools like Prometheus and OpenTelemetry, teams can collect:

Metrics

Logs

Traces

Events

At scale.

In real time.

So why does it still feel like this?

Too many alerts.

Not enough clarity.

The Problem: More Signals, Less Meaning

Most teams don’t suffer from a lack of data.

They suffer from:

Alert storms

Duplicate signals

Conflicting indicators

No clear root cause

A single issue can trigger:

Infrastructure alerts

Application alerts

Network alerts

All at once.

Each technically correct.

But collectively:

Unhelpful.

Why Alert Noise Happens

Observability tools report what they see.

They do not interpret it.

A typical setup might include:

Threshold-based alerts (CPU > 80%)

Rate-based alerts (error spikes)

Event-driven alerts (state changes)

Each one fires independently.

There is no shared understanding of:

What matters

What is related

What action is required

So the system produces noise.
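To make this concrete, here is a minimal sketch of three rules evaluating the same incident independently. The `Sample` fields, the rule functions, and the 3x error-rate multiplier are illustrative assumptions, not the configuration of any particular tool.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Sample:
    """One snapshot of a service (all field names are hypothetical)."""
    cpu_percent: float
    errors_per_min: float
    baseline_errors_per_min: float
    previous_state: str
    state: str


def threshold_alert(s: Sample) -> Optional[str]:
    # Threshold-based: fires when CPU crosses a fixed line.
    return "CPU > 80%" if s.cpu_percent > 80 else None


def rate_alert(s: Sample) -> Optional[str]:
    # Rate-based: fires when errors exceed 3x the baseline (assumed multiplier).
    if s.errors_per_min > 3 * s.baseline_errors_per_min:
        return "error spike"
    return None


def event_alert(s: Sample) -> Optional[str]:
    # Event-driven: fires on any state change.
    if s.state != s.previous_state:
        return f"state change {s.previous_state}->{s.state}"
    return None


RULES: List[Callable[[Sample], Optional[str]]] = [
    threshold_alert,
    rate_alert,
    event_alert,
]


def evaluate(sample: Sample) -> List[str]:
    """Run every rule on its own; no rule knows about the others."""
    alerts = []
    for rule in RULES:
        message = rule(sample)
        if message is not None:
            alerts.append(message)
    return alerts


# One underlying incident...
incident = Sample(
    cpu_percent=93.0,
    errors_per_min=120.0,
    baseline_errors_per_min=10.0,
    previous_state="healthy",
    state="degraded",
)
# ...produces three separate alerts.
alerts = evaluate(incident)
print(alerts)
```

Each rule is correct in isolation. Nothing in `evaluate` says that the three messages describe the same problem, which is the gap the rest of this post is about.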

Why AIOps Alone Doesn’t Fix It

AIOps platforms promise to reduce noise.

And they can help.

But they rely on the same inputs.

If those inputs are:

Fragmented

Ambiguous

Lacking context

Then AIOps has to guess.

And guesswork leads to:

False correlations

Missed issues

Low trust from teams

The Missing Layer: From Alerts to Meaning

What’s missing is not another tool.

It’s a signal layer.

A layer that:

Combines related telemetry

Applies domain knowledge

Produces a single, clear interpretation

Instead of dozens of alerts, you get:

Service State: Amber

Context: Error rate increase linked to upstream latency

Confidence: High

Now there is one signal.

Not twenty.
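As a rough illustration, a single interpreted signal like the one above could be modelled as a small record. The `ServiceSignal` class, its field names, and the `checkout` service are assumptions made for this sketch, not a documented PromptFE schema.

```python
from dataclasses import dataclass
from enum import Enum


class State(Enum):
    GREEN = "Green"
    AMBER = "Amber"
    RED = "Red"


@dataclass(frozen=True)
class ServiceSignal:
    """One interpreted signal per service (hypothetical shape)."""
    service: str
    state: State
    context: str
    confidence: str

    def summary(self) -> str:
        # A single human-readable line instead of a stream of raw alerts.
        return (
            f"{self.service}: {self.state.value} "
            f"({self.confidence} confidence) - {self.context}"
        )


signal = ServiceSignal(
    service="checkout",  # hypothetical service name
    state=State.AMBER,
    context="Error rate increase linked to upstream latency",
    confidence="High",
)
print(signal.summary())
```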

How PromptFE Works With Your Stack

PromptFE (Prompt Feature Engineering) sits between telemetry and alerting.

Prometheus / OpenTelemetry → PromptFE → AIOps / Alerts / Automation

It does not replace your stack.

It enhances it.

1. Ingest existing signals

Metrics, logs, and traces flow in from:

Prometheus

OpenTelemetry

Other sources

No changes to collection.

2. Subject matter experts define meaning

Using a sandbox GUI, engineers define:

What normal looks like

What combinations indicate issues

What should trigger action

No code.

Just expertise.

3. Signals are combined and scored

PromptFE applies:

Correlation across signals

Trend analysis

Domain-specific logic

This creates a scoring profile.

A reusable model of judgement.
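One way to picture a scoring profile is as a small, reusable object that turns several signals into one state. The sketch below is an assumption-heavy stand-in: it uses a weighted sum with a simple rising-trend term in place of whatever correlation and domain logic a real profile would apply, and the `ScoringProfile` class, weights, and thresholds are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ScoringProfile:
    """Hypothetical profile: weights and thresholds chosen by an expert."""
    weights: Dict[str, float]  # how much each signal matters
    amber_at: float            # score at or above this is Amber
    red_at: float              # score at or above this is Red

    def score(self, signals: Dict[str, List[float]]) -> float:
        """Combine normalised (0..1) series into a single 0..1 score."""
        total = 0.0
        for name, weight in self.weights.items():
            series = signals[name]
            level = series[-1]                         # latest value
            trend = max(0.0, series[-1] - series[0])   # count rising trends only
            total += weight * min(1.0, level + trend)
        return total / sum(self.weights.values())

    def state(self, signals: Dict[str, List[float]]) -> str:
        value = self.score(signals)
        if value >= self.red_at:
            return "Red"
        if value >= self.amber_at:
            return "Amber"
        return "Green"


profile = ScoringProfile(
    weights={"error_rate": 0.6, "upstream_latency": 0.4},
    amber_at=0.4,
    red_at=0.8,
)

# Errors and upstream latency are both climbing.
degrading = {
    "error_rate": [0.1, 0.2, 0.4],
    "upstream_latency": [0.2, 0.3, 0.5],
}
# Both signals are flat and low.
quiet = {
    "error_rate": [0.1, 0.1, 0.1],
    "upstream_latency": [0.2, 0.2, 0.2],
}

print(profile.state(degrading))
print(profile.state(quiet))
```

The profile is plain data plus a deterministic function, so the same inputs always give the same state, and the weights can be revised as the expert's judgement changes.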

4. Outputs replace raw alerts

Instead of many alerts, you get:

A single state (Green / Amber / Red)

Clear context

Confidence levels

These outputs feed:

Alerting systems

AIOps platforms

Automation workflows
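A minimal sketch of that fan-out, assuming the output is serialised as JSON and handed to a list of consumer callbacks. The `publish` function, the payload keys, and the in-memory consumers are hypothetical; a real integration would use whatever interface each downstream system exposes.

```python
import json
from typing import Callable, Dict, List

Handler = Callable[[str], None]


def publish(signal: Dict[str, str], handlers: List[Handler]) -> str:
    """Serialise one signal and deliver the same payload to every consumer."""
    payload = json.dumps(signal, sort_keys=True)
    for handler in handlers:
        handler(payload)
    return payload


# In-memory stand-ins for the three kinds of consumer named above.
received: Dict[str, List[str]] = {"alerting": [], "aiops": [], "automation": []}
handlers: List[Handler] = [
    received["alerting"].append,
    received["aiops"].append,
    received["automation"].append,
]

payload = publish(
    {
        "service": "checkout",  # hypothetical service name
        "state": "Amber",
        "context": "Error rate increase linked to upstream latency",
        "confidence": "High",
    },
    handlers,
)
print(payload)
```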

Why This Reduces Alert Noise

Because noise is not a volume problem.

It’s a meaning problem.

PromptFE reduces noise by:

Merging related signals

Removing duplicate alerts

Adding context upfront

So instead of reacting to alerts, teams act on:

Clear, engineered signals
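The merging and de-duplication steps can be sketched in a few lines. This example assumes alerts are related when they name the same service, which is a simplification chosen for illustration; the `merge_alerts` function and the alert tuple layout are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# (service, source, message); this layout is an assumption for the sketch.
Alert = Tuple[str, str, str]


def merge_alerts(alerts: List[Alert]) -> Dict[str, List[str]]:
    """Group alerts by service and drop exact duplicates within each group."""
    grouped: Dict[str, List[str]] = defaultdict(list)
    for service, source, message in alerts:
        entry = f"{source}: {message}"
        if entry not in grouped[service]:  # remove duplicate alerts
            grouped[service].append(entry)
    return dict(grouped)


raw_alerts: List[Alert] = [
    ("checkout", "infrastructure", "CPU > 80%"),
    ("checkout", "application", "error spike"),
    ("checkout", "application", "error spike"),  # duplicate
    ("checkout", "network", "upstream latency high"),
]

merged = merge_alerts(raw_alerts)
print(merged)
```

Four raw alerts collapse into one group for one service, with the contributing evidence kept alongside it as context.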

Why This Improves AIOps

AIOps works best with structured input.

PromptFE provides:

Deterministic signals

Consistent interpretation

Explainable outputs

So AIOps no longer needs to infer meaning.

It receives it.

The Role of the Subject Matter Expert

This is critical.

PromptFE does not replace engineers.

It captures what they already do.

Today, experts:

Interpret dashboards

Correlate signals

Decide what matters

With PromptFE, they:

Define that logic once

Apply it consistently

Improve it over time

AI and automation run on their expertise.

Not instead of it.

The Bigger Shift

This is the transition:

From alerts → to signals

From signals → to meaning

From meaning → to action

The Bottom Line

Modern observability stacks are not broken.

They are incomplete.

They collect everything.

But they do not explain anything.

PromptFE adds the missing layer:

A consistent, explainable signal that both humans and AIOps can trust.

So instead of drowning in alerts, teams can focus on action.

Copyright NetMinded, a trading name of SeeThru Networks ©